Blackwell Arrives at the Workstation

Nvidia announced the RTX PRO 8000 series this week, bringing its Blackwell architecture to professional workstations for the first time. Blackwell is the same architecture behind Nvidia’s data center accelerators, now packaged for a different class of buyer.

The jump from the previous-gen RTX 6000 Ada Generation is substantial. Nvidia claims up to 2.5x the AI training performance and 2x the rendering performance, all while keeping the same 300-watt power draw.

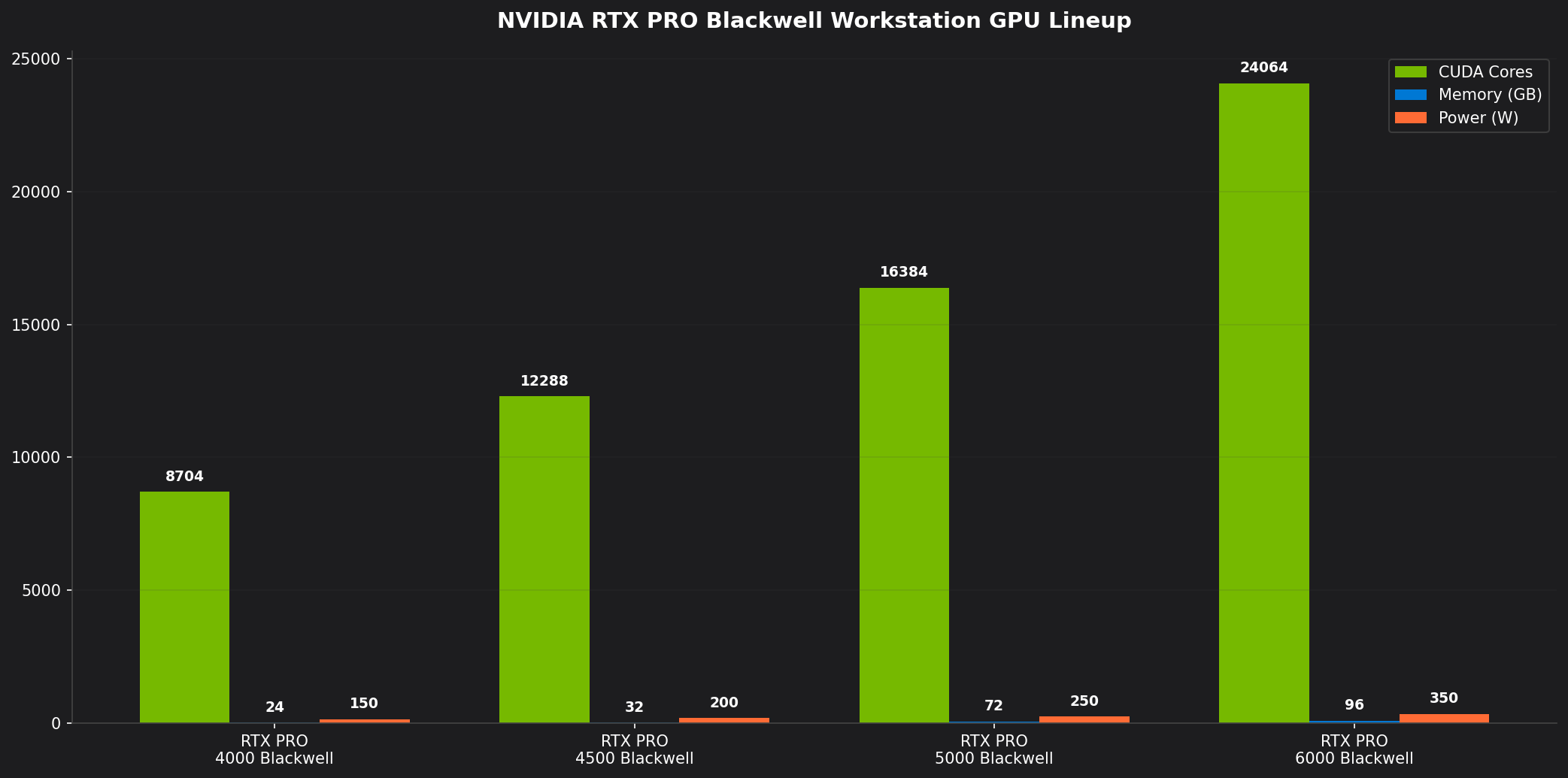

Architecture and Specifications

The RTX PRO 8000 is built on a monolithic Blackwell die manufactured on TSMC’s 4NP process node. The GPU features 21,760 CUDA cores, 680 fifth-generation Tensor Cores, and 170 fourth-generation ray-tracing (RT) cores. It carries 96 GB of GDDR7 memory on a 384-bit bus, delivering approximately 1,536 GB/s of bandwidth, a 60 percent improvement over the previous generation’s 960 GB/s.
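Peak memory bandwidth follows from bus width and per-pin data rate. The sketch below assumes GDDR7 running at 32 Gb/s per pin, a rate back-calculated from the 1,536 GB/s figure rather than one stated in the announcement, and a 20 Gb/s GDDR6 rate for the RTX 6000 Ada derived the same way from its 960 GB/s on a 384-bit bus. The function name `peak_bandwidth_gbs` is purely illustrative.

```python
def peak_bandwidth_gbs(bus_width_bits: int, data_rate_gbps_per_pin: float) -> float:
    """Peak bandwidth in GB/s: bus width in bytes times per-pin data rate in Gb/s."""
    return bus_width_bits / 8 * data_rate_gbps_per_pin

# Assumption: 32 Gb/s per pin is inferred from the 1,536 GB/s figure, not a confirmed spec.
rtx_pro_8000 = peak_bandwidth_gbs(384, 32.0)
# Assumption: 20 Gb/s per pin is inferred from the RTX 6000 Ada's 960 GB/s on a 384-bit bus.
rtx_6000_ada = peak_bandwidth_gbs(384, 20.0)

# Generational gain expressed as a percentage.
improvement_pct = (rtx_pro_8000 / rtx_6000_ada - 1) * 100
print(f"{rtx_pro_8000:.0f} GB/s vs {rtx_6000_ada:.0f} GB/s: +{improvement_pct:.0f}%")
```

With the bus width unchanged, the entire bandwidth gain in this model comes from the faster per-pin rate of GDDR7.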

| Specification | RTX PRO 8000 | RTX 6000 Ada (previous gen) |
|---|---|---|
| CUDA Cores | 21,760 | 18,176 |
| Tensor Cores | 680 (5th gen) | 568 (4th gen) |
| Memory | 96 GB GDDR7 | 48 GB GDDR6 |
| Memory Bandwidth | 1,536 GB/s | 960 GB/s |
| TDP | 300 W | 300 W |
| Architecture | Blackwell | Ada Lovelace |
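Both cards share a 300 W rating, so the generation-over-generation ratios in the spec table also serve as per-watt comparisons. This short sketch computes them directly from the table values. The dictionary layout is just one convenient way to organize the numbers.

```python
# Generation-over-generation ratios using the spec table's numbers.
# Both cards are rated at 300 W, so each ratio is also the per-watt ratio.
specs = {
    # name: (RTX PRO 8000, RTX 6000 Ada)
    "CUDA cores": (21_760, 18_176),
    "Tensor cores": (680, 568),
    "Memory (GB)": (96, 48),
    "Memory bandwidth (GB/s)": (1_536, 960),
}

ratios = {name: new / old for name, (new, old) in specs.items()}

for name, ratio in ratios.items():
    print(f"{name:<25} {ratio:.2f}x")
```

Core counts rise by roughly 20 percent while memory doubles and bandwidth grows 60 percent. That suggests much of the claimed 2.5x training gain would have to come from per-core changes, such as the newer Tensor Core generation, rather than from extra cores alone.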

The memory doubling is the spec that matters most for professional workloads. Fine-tuning large language models, high-resolution 3D rendering, and complex simulations all benefit from holding larger datasets in GPU memory instead of spilling into slower system RAM or splitting across multiple cards.
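One rough way to see why capacity matters is to estimate how much memory a model's weights alone occupy at different numeric precisions. This sketch assumes 1 GB = 10^9 bytes and counts weights only, with no activations, KV cache, or framework overhead. The 13B, 34B, and 70B sizes are illustrative choices, not models named in the announcement. Real workloads will therefore need more headroom than the sketch shows.

```python
# Weights-only footprint: parameter count x bytes per parameter.
# Assumptions: 1 GB = 1e9 bytes; activations, KV cache, and framework overhead
# are ignored; the model sizes below are illustrative.
BYTES_PER_PARAM = {"16-bit": 2.0, "8-bit": 1.0, "4-bit": 0.5}
CARD_CAPACITIES_GB = (48, 96)  # RTX 6000 Ada, RTX PRO 8000

def weights_gb(num_params: float, bytes_per_param: float) -> float:
    """Approximate storage needed for the model weights alone, in GB."""
    return num_params * bytes_per_param / 1e9

for billions in (13, 34, 70):
    for precision, bpp in BYTES_PER_PARAM.items():
        gb = weights_gb(billions * 1e9, bpp)
        # Check the footprint against each card's memory capacity.
        fits = ", ".join(f"{cap} GB: {'yes' if gb <= cap else 'no'}" for cap in CARD_CAPACITIES_GB)
        print(f"{billions:>2}B @ {precision:<6} {gb:6.1f} GB  ({fits})")
```

Under these assumptions, a 70B model with 8-bit weights (about 70 GB) fits within 96 GB but not 48 GB. The same model at 16-bit precision (about 140 GB) exceeds both.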

Target Applications and Workloads

Nvidia is positioning the RTX PRO 8000 as the go-to GPU for professional workstations across several areas:

AI Training and Inference: The card handles fine-tuning large language models, computer vision training, and generative AI workloads directly on a workstation. For companies that can’t or won’t use cloud infrastructure, it’s a viable on-premises option. Nvidia demoed the GPU fine-tuning a 70-billion-parameter model in under 12 hours on a single card, a job that previously needed multiple GPUs.

3D Rendering and Visualization: Improved ray-tracing cores deliver real-time path tracing good enough for final-quality rendering in many cases. Pixar, Autodesk, and Dassault Systèmes have all announced optimized support in their flagship applications.

Scientific Computing: CUDA remains the dominant platform for scientific computing, and the enhanced Tensor cores accelerate matrix operations used in molecular dynamics, climate modeling, and financial risk analysis. CERN and the Max Planck Institute have already placed orders.

Autonomous Vehicles and Robotics: The GPU supports Nvidia’s Omniverse platform for creating high-fidelity digital twins used in autonomous vehicle simulation and robotics training. Mercedes-Benz and Toyota are reportedly deploying RTX PRO 8000-based workstations for their simulation pipelines.
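The single-card, 70-billion-parameter fine-tuning demo invites a quick memory check. The announcement does not say which method Nvidia used. This sketch compares conventional full fine-tuning with a parameter-efficient approach in the style of QLoRA under several assumptions:

* roughly 16 bytes of weight, gradient, and Adam optimizer state per trainable parameter
* a frozen 4-bit base model
* adapters covering a hypothetical 0.5 percent of the parameters

Activations and quantization metadata are ignored.

```python
# Rough memory budget for fine-tuning a 70-billion-parameter model.
# Assumptions (not from the article): mixed-precision Adam needs ~16 bytes per
# trainable parameter (2 weights + 2 grads + 4 FP32 master copy + 8 Adam moments);
# the adapter case freezes a 4-bit base and trains a hypothetical 0.5% of params.
PARAMS = 70e9
ADAM_MIXED_BYTES = 2 + 2 + 4 + 8  # bytes per trainable parameter
CARD_GB = 96

def full_finetune_gb(params: float) -> float:
    """Every parameter is trainable, so each one carries full optimizer state."""
    return params * ADAM_MIXED_BYTES / 1e9

def adapter_finetune_gb(params: float, base_bytes: float = 0.5,
                        adapter_fraction: float = 0.005) -> float:
    """Frozen quantized base weights plus optimizer state for the adapters only."""
    frozen = params * base_bytes
    trainable = params * adapter_fraction * ADAM_MIXED_BYTES
    return (frozen + trainable) / 1e9

full = full_finetune_gb(PARAMS)
adapter = adapter_finetune_gb(PARAMS)
print(f"Full fine-tune:    {full:,.0f} GB (fits on one {CARD_GB} GB card: {full <= CARD_GB})")
print(f"Adapter fine-tune: {adapter:,.1f} GB (fits on one {CARD_GB} GB card: {adapter <= CARD_GB})")
```

Full fine-tuning comes out near 1,120 GB, far beyond a single 96 GB card. The adapter-based estimate lands around 41 GB, which is one plausible reason a single-card 70B run could be feasible.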

Competitive Landscape

Nvidia has long dominated the professional GPU market, but the competition is getting louder. AMD’s Radeon PRO W7900 series offers strong performance at a lower price point, particularly for OpenCL and ROCm workloads. Intel’s Gaudi and Ponte Vecchio architectures are also gaining ground in enterprise AI training.

Nvidia’s moat, though, runs deeper than specs. CUDA has been in development for over 15 years, and most AI frameworks, rendering engines, and scientific applications are optimized for it first. Migrating workloads to AMD’s ROCm or Intel’s oneAPI requires real code changes and testing — a barrier many organizations won’t cross.

“Nvidia’s real advantage is not the silicon. It’s the ecosystem,” said Dr. Linda Park, professor of computer architecture at MIT. “Every researcher, every engineer, every developer knows CUDA. The switching cost isn’t just financial — it’s organizational. That’s very hard to compete with.”

Pricing and Availability

The RTX PRO 8000 carries an $8,999 MSRP, aimed squarely at professional and enterprise buyers. OEM partners like Dell, HP, and Lenovo will likely get volume pricing slightly below that. Availability is set for Q3 2026, though initial supply will be tight as Nvidia prioritizes data center Blackwell production.

For comparison, the previous-gen RTX 6000 Ada launched at $6,799 and has held its value well on the secondary market. The price jump reflects both the increased capability and the strong demand for professional AI hardware.
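Launch prices can also be normalized by memory capacity. This sketch uses only the two MSRPs and memory sizes given in the article. Street and OEM pricing will differ.

```python
# Price per gigabyte of GPU memory at launch MSRP, using the article's figures.
cards = {
    "RTX PRO 8000": {"msrp_usd": 8_999, "memory_gb": 96},
    "RTX 6000 Ada": {"msrp_usd": 6_799, "memory_gb": 48},
}

per_gb = {name: c["msrp_usd"] / c["memory_gb"] for name, c in cards.items()}

# Percentage change in list price from one generation to the next.
price_increase_pct = (
    cards["RTX PRO 8000"]["msrp_usd"] / cards["RTX 6000 Ada"]["msrp_usd"] - 1
) * 100

for name, cost in per_gb.items():
    print(f"{name}: ${cost:,.2f} per GB")
print(f"MSRP increase: {price_increase_pct:.1f}%")
```

By that measure, the list price rises about 32 percent. The cost per gigabyte of GPU memory, however, drops by roughly a third, from about $141.65 to about $93.74.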

Impact on the AI Hardware Ecosystem

The RTX PRO 8000’s reach extends past the workstation market. By bringing Blackwell-class AI training to the workstation, Nvidia is putting advanced AI development within reach of organizations that previously needed expensive cloud GPU time.

Startups and research labs with limited cloud budgets will be able to iterate faster, which could accelerate the pace of AI innovation. Enterprises with strict data privacy rules can train and deploy models without sending sensitive data to third-party providers.
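Whether a workstation card beats cloud rental depends largely on utilization. A simple break-even estimate divides the card's price by an hourly cloud rate. The $2.50 per GPU-hour used here is a hypothetical placeholder, not a quoted price. The model also ignores power, host hardware, and depreciation.

```python
# Break-even point between buying a card and renting cloud GPU time.
# Assumption: the hourly rate is a placeholder, not a quoted price; power, cooling,
# host hardware, depreciation, and idle time are all ignored.
CARD_PRICE_USD = 8_999          # RTX PRO 8000 MSRP from the article
HYPOTHETICAL_CLOUD_RATE = 2.50  # USD per GPU-hour, illustrative only

def break_even_hours(card_price: float, hourly_rate: float) -> float:
    """Hours of cloud rental whose total cost equals the card's purchase price."""
    if hourly_rate <= 0:
        raise ValueError("hourly_rate must be positive")
    return card_price / hourly_rate

hours = break_even_hours(CARD_PRICE_USD, HYPOTHETICAL_CLOUD_RATE)
print(f"{hours:,.0f} GPU-hours (~{hours / 24:,.0f} days of continuous use)")
```

At that placeholder rate, the break-even point is about 3,600 GPU-hours, or roughly 150 days of continuous use. An actual quoted rate shifts the answer proportionally.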

Nvidia’s grip on the GPU market, where it holds close to 90 percent of AI accelerator share, isn’t loosening, and the company’s market cap sits above $3.5 trillion. The RTX PRO 8000 further reinforces its position as the infrastructure provider for the AI era.

Looking Ahead

The RTX PRO 8000 is just the first wave. Nvidia has hinted that two more variants are in development: a higher-end model with 192 GB of memory and a more affordable entry-level option. New software tools optimized for Blackwell are expected at this year’s GTC conference.

For professional users, the message is straightforward: AI-powered workstations are here, and Nvidia is setting the standard.
