The AI Pricing War Reaches a New Phase

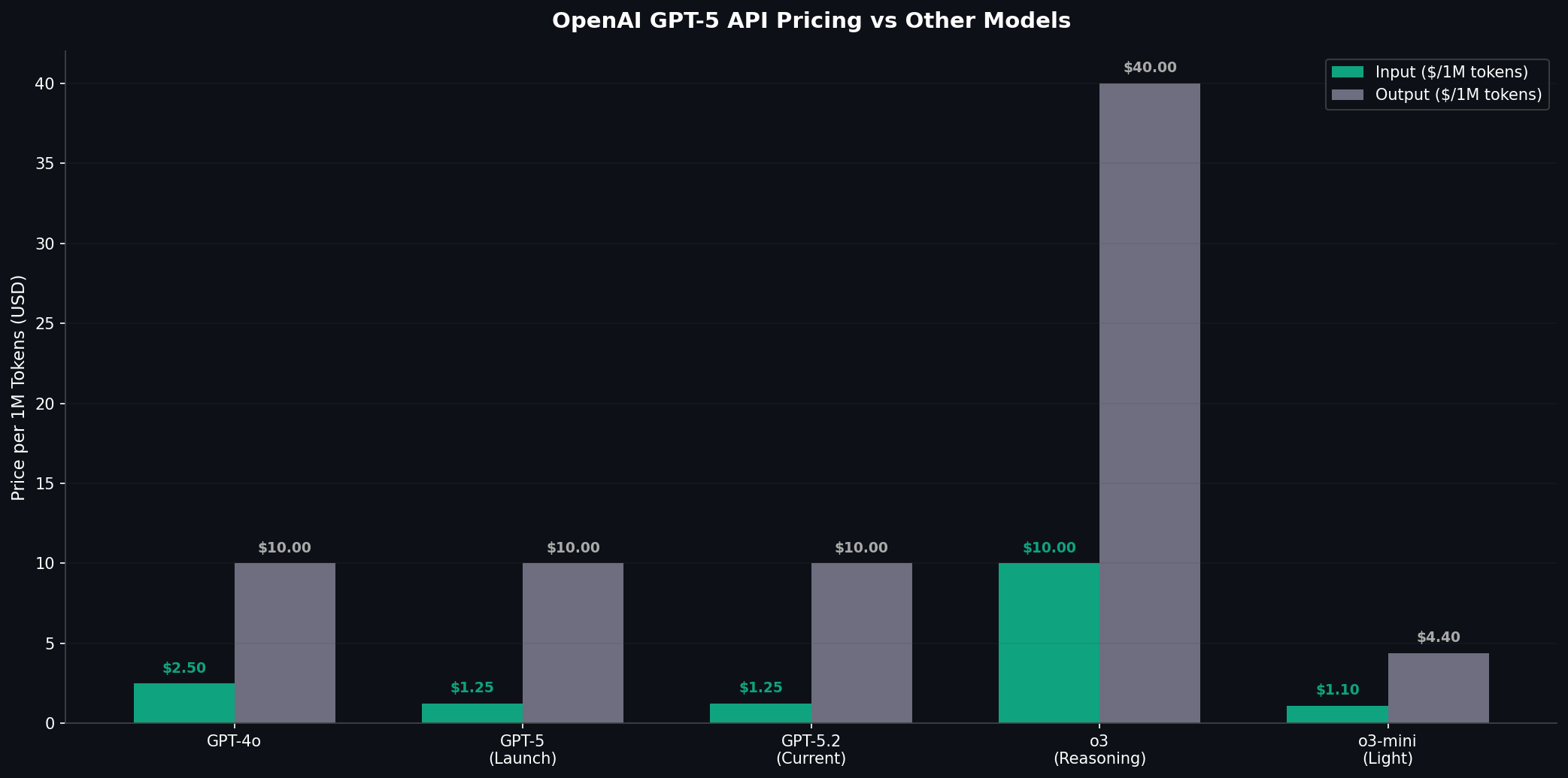

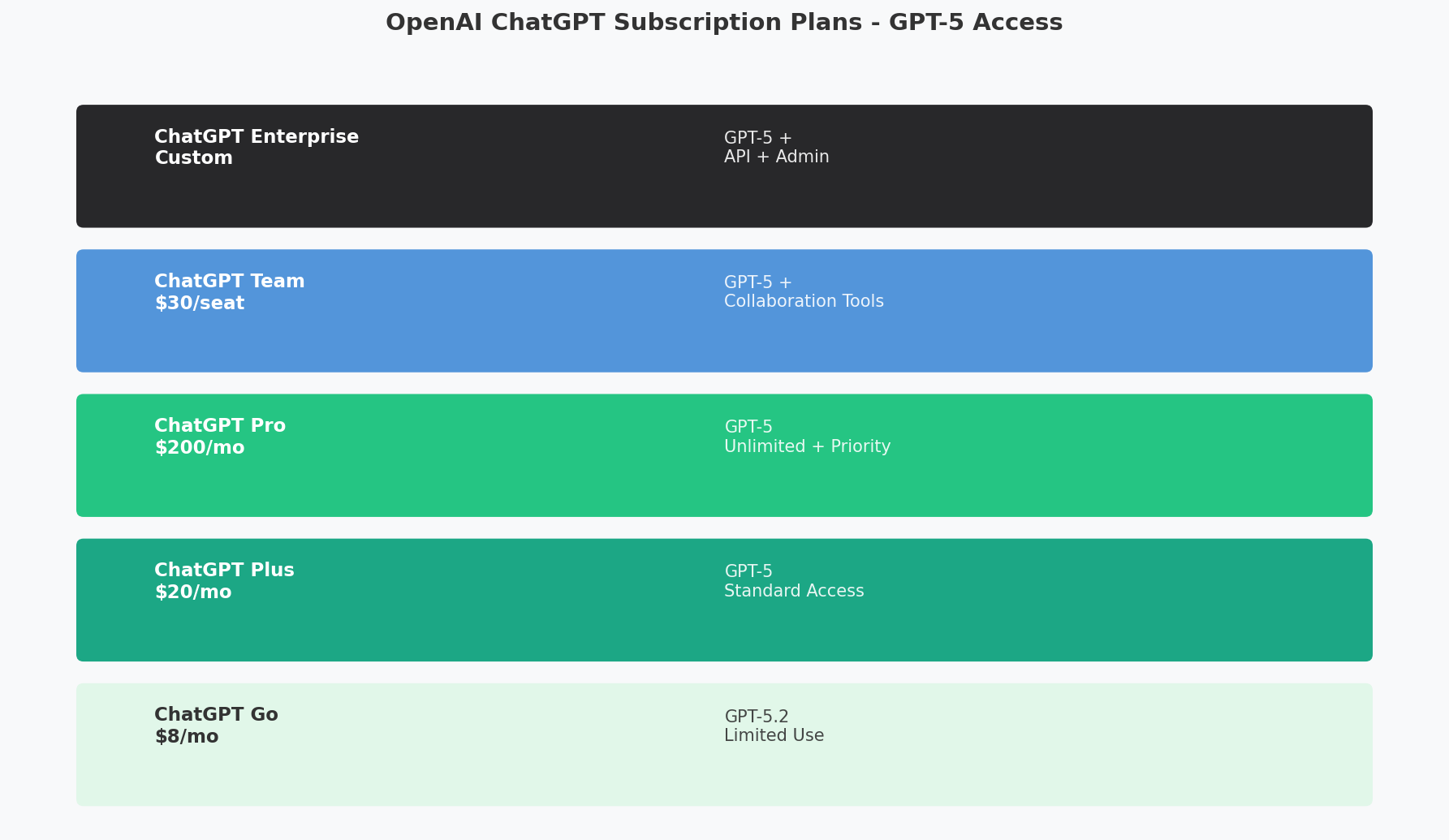

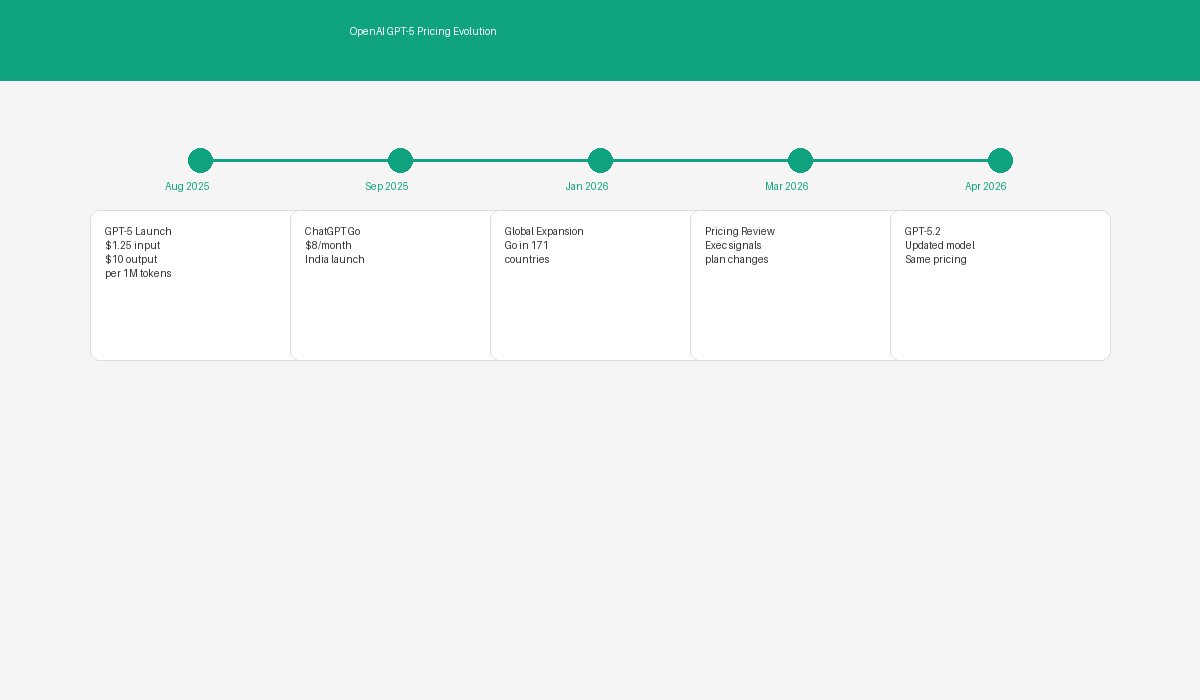

OpenAI just cut GPT-5 API prices significantly — input tokens down about 30 percent, output tokens down 25 percent across most model variants. The changes are effective immediately. ChatGPT subscription tiers got adjusted too, with new mid-range options slotted between the free and Pro tiers.

The cuts are a response to competitive pressure from a growing field of AI labs delivering comparable capabilities at much lower prices. The AI model market used to be a small club. It’s now a free-for-all where capability, cost, and distribution are all up for grabs.

The DeepSeek Disruption

The main trigger for OpenAI’s move is DeepSeek, a Chinese AI lab that upended large language model economics. DeepSeek’s V4 model, launched earlier this year, matches GPT-5 and Claude Opus 4.7 on benchmarks while charging roughly 9 times less per input token and 18 times less per output token than GPT-5 does even after the cut. The gap to Claude Opus 4.7 is wider still.

DeepSeek pulled this off through architectural innovations — a mixture-of-experts design that activates only a fraction of parameters per token — and more efficient training methods that slashed compute requirements. The company also open-sourced key components, letting the broader community build on top of its approach.
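To make "activates only a fraction of parameters per token" concrete, here is a minimal sketch of generic top-k expert routing. It is not DeepSeek’s actual implementation: the expert count, the scalar "experts", the router scores, and the names `top_k_route` and `moe_forward` are all assumptions chosen for illustration.

```python
import math

def top_k_route(gate_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    # Indices of the k largest gate logits.
    top = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)[:k]
    # Softmax over the selected logits only, so the k weights sum to 1.
    peak = max(gate_logits[i] for i in top)
    exps = [math.exp(gate_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

def moe_forward(x, experts, gate_logits, k=2):
    """Run only the k selected experts on x and blend their outputs."""
    return sum(w * experts[i](x) for i, w in top_k_route(gate_logits, k))

# Hypothetical toy layer: 8 experts, each just scales its input.
NUM_EXPERTS = 8
experts = [lambda x, scale=scale: scale * x for scale in range(1, NUM_EXPERTS + 1)]
# Illustrative router scores for one token (a real router computes these from the token).
gate_logits = [0.1, 2.0, -1.0, 0.5, 1.5, -0.3, 0.0, 0.7]

routes = top_k_route(gate_logits, k=2)
output = moe_forward(3.0, experts, gate_logits, k=2)
active_fraction = len(routes) / NUM_EXPERTS

print([i for i, _ in routes])   # experts 1 and 4 have the highest scores
print(active_fraction)          # 0.25: only 2 of 8 experts ran for this token
```

The other six experts are never evaluated for this token, which is where the compute savings in a design like this would come from.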

| Model | Input Price (per 1M tokens) | Output Price (per 1M tokens) |
|---|---|---|
| GPT-5 (new pricing) | $2.50 | $10.00 |
| GPT-5 (old pricing) | $3.50 | $14.00 |
| DeepSeek V4 | $0.27 | $0.55 |
| Claude Opus 4.7 | $3.75 | $15.00 |
| Claude Sonnet 4.5 | $0.75 | $3.75 |

The pricing differential has forced a fundamental rethinking of AI model economics across the industry. If a competitor can deliver comparable performance at one-tenth the cost, the incumbents must either match the pricing or demonstrate sufficient differentiation to justify the premium. OpenAI’s price cuts are a step toward the former strategy, although its listed rates remain well above DeepSeek’s.
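The size of the gap can be checked directly from the listed prices. The sketch below uses only the figures in the table; the helper names `price_ratio` and `percent_cut` are made up for this example.

```python
# USD per 1M tokens as (input, output), copied from the table above.
PRICES = {
    "GPT-5 (new pricing)": (2.50, 10.00),
    "GPT-5 (old pricing)": (3.50, 14.00),
    "DeepSeek V4": (0.27, 0.55),
    "Claude Opus 4.7": (3.75, 15.00),
    "Claude Sonnet 4.5": (0.75, 3.75),
}

def price_ratio(model, baseline):
    """How many times pricier `model` is than `baseline`, as (input, output)."""
    (m_in, m_out), (b_in, b_out) = PRICES[model], PRICES[baseline]
    return m_in / b_in, m_out / b_out

def percent_cut(old, new):
    """Percentage reduction from `old` to `new`, as (input, output)."""
    (o_in, o_out), (n_in, n_out) = PRICES[old], PRICES[new]
    return 100 * (o_in - n_in) / o_in, 100 * (o_out - n_out) / o_out

gpt_vs_deepseek = price_ratio("GPT-5 (new pricing)", "DeepSeek V4")
opus_vs_deepseek = price_ratio("Claude Opus 4.7", "DeepSeek V4")
gpt_cut = percent_cut("GPT-5 (old pricing)", "GPT-5 (new pricing)")

print(f"GPT-5 vs DeepSeek V4: {gpt_vs_deepseek[0]:.1f}x input, {gpt_vs_deepseek[1]:.1f}x output")
print(f"Opus 4.7 vs DeepSeek V4: {opus_vs_deepseek[0]:.1f}x input, {opus_vs_deepseek[1]:.1f}x output")
print(f"GPT-5 cut: {gpt_cut[0]:.1f}% input, {gpt_cut[1]:.1f}% output")
```

On these rows, GPT-5 comes out roughly 9 times pricier than DeepSeek V4 on input and 18 times on output, and Claude Opus 4.7 roughly 14 and 27 times. The two GPT-5 rows imply a cut of about 29 percent on both input and output for this particular variant.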

Anthropic’s Competitive Position

Anthropic has been aggressive too. Claude Opus 4.7 consistently ranks at or near the top of independent benchmarks, and Claude Sonnet 4.5 has become the workhorse for enterprises that want strong performance at a reasonable price.

Anthropic’s strategy leans on reliability, safety, and enterprise trust rather than price alone. The company has invested heavily in constitutional AI — embedding safety principles directly into the training process — and positioned Claude as the go-to for regulated industries like healthcare, finance, and government.

Even Anthropic has felt the squeeze. The company recently introduced a Claude Haiku tier at $0.15 per million input tokens, going after the cost-sensitive segment DeepSeek has been winning over.

Impact on Developers and Enterprises

For organizations processing millions or billions of tokens daily, a 30 percent cut in input pricing adds up fast.

“We were spending approximately $40,000 per month on GPT-5 API calls for our customer service automation,” said David Kowalski, CTO of a mid-sized e-commerce platform. “The new pricing brings that down to about $28,000. That’s a meaningful difference, and it makes AI-powered features viable for use cases we previously wrote off as too expensive.”
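How much a given bill falls depends on how spending splits between input and output tokens, since the two were cut by different amounts. The sketch below is a rough estimator, assuming the headline cuts of 30 percent (input) and 25 percent (output) and constant usage. The function name and the `input_share` parameter are hypothetical, and the quote does not state the actual split.

```python
def new_monthly_bill(old_bill, input_share, input_cut=0.30, output_cut=0.25):
    """Estimate a monthly bill after the price cuts.

    input_share is the fraction of the OLD bill spent on input tokens (0.0 to 1.0).
    """
    if not 0.0 <= input_share <= 1.0:
        raise ValueError("input_share must be between 0.0 and 1.0")
    input_spend = old_bill * input_share
    output_spend = old_bill - input_spend
    # Apply each cut to its own portion of the old bill.
    return input_spend * (1 - input_cut) + output_spend * (1 - output_cut)

OLD_BILL = 40_000  # USD per month, from the quote above

for share in (1.0, 0.8, 0.5, 0.0):
    estimate = new_monthly_bill(OLD_BILL, share)
    print(f"{share:.0%} of spend on input -> ${estimate:,.0f}")
```

Under these assumptions the new bill lands between $28,000 (all spend on input tokens) and $30,000 (all spend on output tokens), so a figure of about $28,000 is consistent with a workload dominated by input tokens.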

For developers building AI apps, the pricing war lowers the barrier to entry. Startup founders who worried about model costs eating their margins can now build more ambitious products. The broader ecosystem benefits from more experimentation.

But there’s a flip side. Training and running large language models requires enormous computational resources, and only companies with deep pockets and efficient infrastructure can sustain aggressive pricing. Smaller AI labs without comparable scale may not survive the price war, which could lead to market consolidation.

OpenAI’s Defensible Advantages

Despite the pressure, OpenAI still holds several advantages that go beyond price:

Distribution through ChatGPT: Over 500 million weekly active users make ChatGPT the most widely used AI application on the planet. The subscription business provides a recurring revenue stream less sensitive to API pricing. The new mid-tier option at $14.99/month is designed to capture a broader range of consumers.

Developer Ecosystem: The GPT Store, function calling, and agent frameworks create switching costs beyond model pricing. Developers who’ve built on OpenAI’s platform face real costs migrating elsewhere.

Brand and Trust: OpenAI is still the most recognized name in AI. For many enterprise buyers, choosing OpenAI is the path of least resistance and lowest perceived risk.

Integration and Tooling: OpenAI’s SDKs, documentation, and support infrastructure make it easier to build with GPT models than with competing alternatives.

Is Price Competition Good for the Industry?

Lower model costs democratize access to advanced AI. Small businesses, researchers, and individual developers who were previously priced out can now experiment with state-of-the-art models. That should accelerate innovation and lead to applications that wouldn’t exist otherwise.

Lower prices also force efficiency. The push to cut costs drives innovation in model architecture, training methods, and inference optimization — all of which benefit the field. DeepSeek’s success has already sparked a wave of research into more efficient training, including new data curation methods, loss function optimizations, and hardware-software co-design.

The risk is a race to the bottom. If model prices fall below production costs, companies will need to subsidize AI operations from other revenue — sustainable only for the largest, best-capitalized players. That could lead to consolidation and less competition long-term.

There are quality concerns too. If companies cut training data, compute budgets, or safety testing to save money, the resulting models will be less capable and less safe. The industry needs to pursue efficiency without lowering its standards for capability and safety.

The Road Ahead

The pricing war isn’t over. Google’s Gemini models, Meta’s open-source Llama family, and a growing number of specialized AI labs are all competing for market share. The winners will be companies that combine competitive pricing with differentiated capabilities, strong distribution, and sustainable unit economics.

OpenAI’s price cut reflects a practical acknowledgment: the AI market has moved past the era of premium pricing for premium models. The future belongs to companies that can deliver the best combination of capability, cost, and reliability at scale.

For developers and enterprises, the takeaway is simple. The cost of intelligence keeps falling, and the applications you can build with it keep expanding. The question isn’t whether to use AI anymore — it’s how to use it best.
